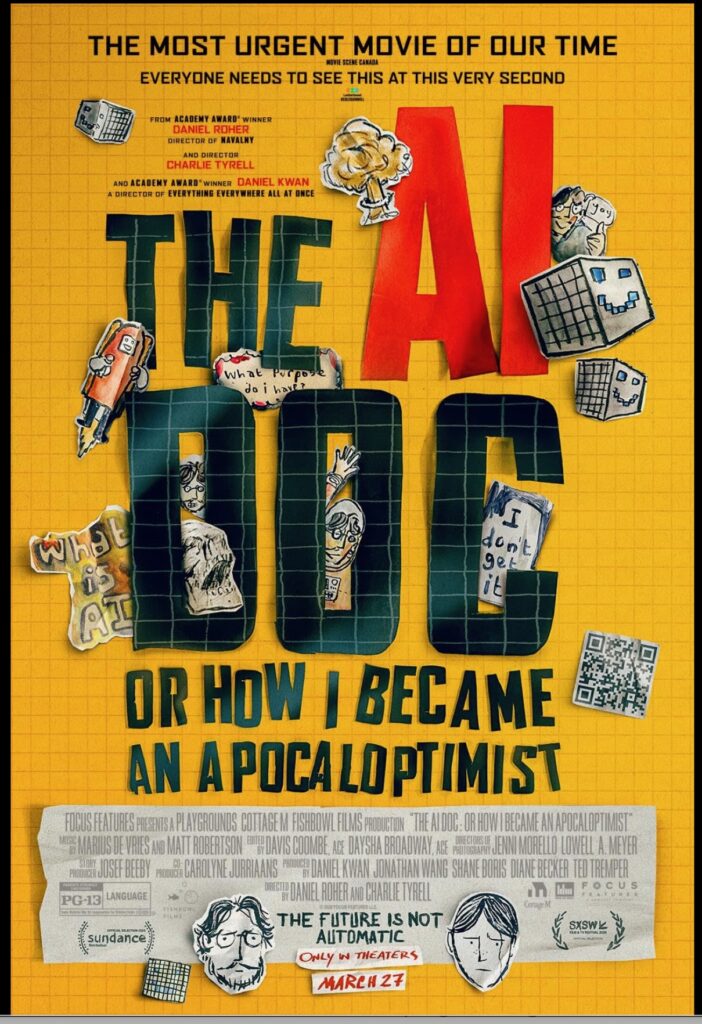

So I’ve already posted about the several A.I. books I’ve read recently, including Eliezer Yudkowsky & Nate Soares’s excellent doomsday-predicting If Anyone Builds It, Everyone Dies (2025) and Mustafa Suleyman’s The Coming Wave: A.I., Power, and Our Future (2023). It’s obvious I’m fascinated by the topic and want to know more. Now along comes a new documentary titled The A.I. Doc: Or How I Became an Apocaloptomist (2026), by the documentary filmmaker/artist Daniel Roher, which is certainly worth a viewing.

I have significant quibbles: As the interviewer, Roher comes across as simply too naïve/dim-witted with some of his questions about A.I. This simpleton attitude frames the documentary as intended for some mythical "general" audience who doesn't know much, if anything, about A.I. and hasn't bothered to read any of the excellent books about it. (Roher's first question is "What is A.I.?" and he asks it repeatedly, as if he weren't listening to his interviewees' explanations.) With a combination of the silly graphics and animation that documentary filmmakers all seem to love (but which usually seem like dumbed-down filler to me), Roher establishes that he has a loving wife and child and is worried about all the doomsaying coming from the A.I. community. That's nice and all, but let's get to the heart of the matter: A.I. . . . friend or foe?

That's where the documentary really takes off. Roher may seem a bit dim as an interviewer, but the tech-world savants he interviews know what they're talking about, explain it without dumbing it down too much, and have many insightful tidbits to add. As usual with A.I. predictions, they tend to split into two camps: A.I. as Doomsday Machine or A.I. as Greatest Thing Ever. The interviewees include two of the authors I've read (Eliezer Yudkowsky and Mustafa Suleyman), among many others. They share their opinions on A.I., of course, but also fascinating anecdotes: One tells of an A.I. that was granted access to a company's emails, through which it learned it was going to be replaced. The A.I. also learned that one of the company's researchers was having an affair, and it blackmailed him, threatening to expose the affair if he didn't cancel its phase-out. That seems awfully "sentient," doesn't it?

Some say A.I. will most likely do away with humans in a quest for unlimited power, while others say that's sci-fi nonsense. After reading much about the subject, I find the extreme predictions on either end (Doomsday or New Era in Human Evolution) less than convincing: The extremes are guessing what might happen in the future, about technology we admittedly don't fully understand.

Here's my fundamental question: If A.I. can soon(ish) do all the jobs humans can do, and corporations/businesses quit hiring people because A.I. will do the same jobs cheaper and faster . . . what will people do? This is touched on (rather obliquely) in both A.I. books I read, and right now the answer to my question seems to be: "We have no idea." That's a pretty scary recipe for upheaval. Both books mention the hazy idea of Universal Basic Income. So . . . someone (the government?) is just going to give us money? That seems very unlikely. For my money, that's one of the biggest issues of all. We're rushing to build robots to replace human work, but if you don't have a job and an income from work, how would you benefit from them? How would you afford that newfangled robot maid/butler (or boyfriend/girlfriend)? Once the A.I. digital technocracy has a good answer to that question, I think we'll all sleep a little easier.